My last post, How to Achieve 20 Gb & 30 Gb Bandwidth through Network Bonding, was our most popular blog entry yet. There was a LOT of discussion about the results of my testing, as well as about network bonding in general (including here), which was awesome to see. It’s great when people care about what you write!

One prevalent comment was that our first network bonding experiments didn’t go far enough: we didn’t move real files to and from large RAIDs across the network.

And so, here is my follow-up post. In this set of experiments, I will attempt to saturate a 20 Gbit network under two different bonding modes by moving real data files to and from a Samba share on a Storinator storage pod. The goal is to show that any user, from a small video producer to a large enterprise, can achieve incredibly fast and reliable throughput from their storage server using 10GbE and bonding.

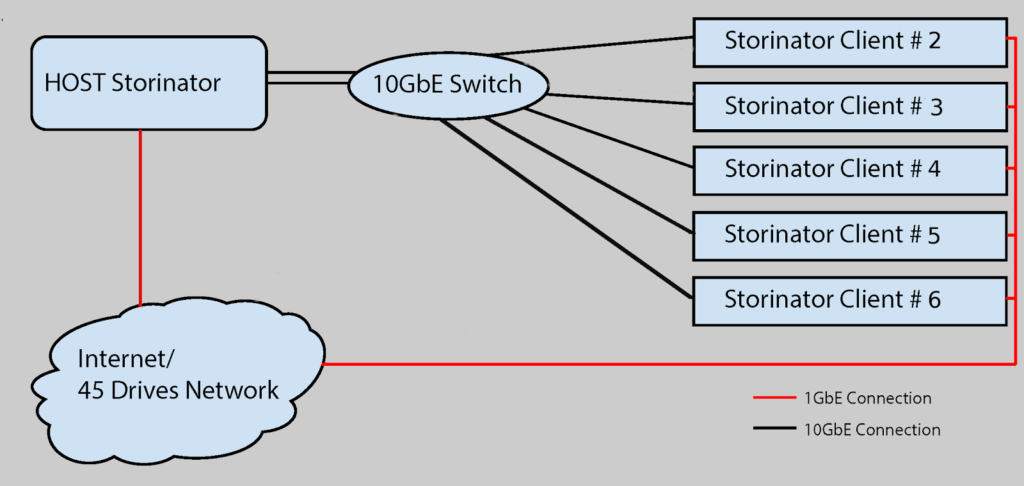

Our Setup in the 45 Drives Lab

In my last post, we used a host Storinator and three Storinator clients connected to our Netgear XS708E unmanaged switch (using Cat 6 network cables). I successfully strung together 20 and 30 GbE networks, but did not do any real file transfers. So for this post, I’m using a Samba share to actually move some files around the network.

For this experiment, our Netgear XS708E switch has been filled with 5 Storinator clients (a mix of our models and configurations) and a single host Storinator. The host server has been configured with bonded NICs using the balance round-robin (balance-rr) policy, theoretically allowing up to 20 Gbit/s of connectivity. Each client is connected via Cat 6 cable to a single 10GbE NIC, while the host server is connected with two Cat 6 cables through two Intel NICs. (You can see detailed configurations of each machine below.)
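On CentOS 7, bond options such as the policy are usually handed to the Linux bonding driver through a `BONDING_OPTS` line in the bond's ifcfg file under /etc/sysconfig/network-scripts. As a rough sketch (the helper name `bonding_opts` and the `miimon=100` link-monitoring default are assumptions, not the lab's recorded settings), that line can be assembled like this:

```python
# Linux bonding driver policy names and their mode numbers (0-6).
BOND_MODES = {
    "balance-rr": 0,
    "active-backup": 1,
    "balance-xor": 2,
    "broadcast": 3,
    "802.3ad": 4,
    "balance-tlb": 5,
    "balance-alb": 6,
}


def bonding_opts(mode, miimon=100, updelay=None):
    """Build a BONDING_OPTS line for an ifcfg-bond file (hypothetical helper).

    miimon=100 is an assumed default, not a value taken from the experiment.
    """
    if mode not in BOND_MODES:
        raise ValueError(f"unknown bonding mode: {mode}")
    opts = [f"mode={mode}", f"miimon={miimon}"]
    if updelay is not None:
        # The driver rounds updelay down to a multiple of miimon,
        # so insist on an exact multiple to avoid surprises.
        if updelay % miimon != 0:
            raise ValueError("updelay should be a multiple of miimon")
        opts.append(f"updelay={updelay}")
    joined = " ".join(opts)
    return f'BONDING_OPTS="{joined}"'


print(bonding_opts("balance-rr"))
print(bonding_opts("balance-alb", updelay=200))
```

Mode 0 (balance-rr) is the round-robin policy the host starts with here, and mode 6 (balance-alb) is the Linux name for adaptive load balancing.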

On my last blog post, one commenter pointed out that “Balance Round-robin is transmit-balancing only.” This is correct: our network is only 20 Gigabit when traffic leaves the host (i.e., when clients read from it). Going the other way, when clients write to the host, we should be limited to 10 Gigabit by the bond policy. I will verify this, and also investigate a different bonding policy that load-balances both transmit and receive, theoretically allowing 20 Gigabit in both directions (reading and writing).
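To make the commenter's point concrete, here is a toy model (not the kernel's actual code) of how balance-rr schedules outgoing frames: each frame simply leaves on the next slave interface in turn, so data read from the host is spread across both links. The interface names `eth0`/`eth1` are hypothetical.

```python
from collections import Counter
from itertools import cycle


def round_robin_transmit(num_frames, slaves):
    """Toy balance-rr transmit: frame i leaves on slaves[i % len(slaves)]."""
    next_slave = cycle(slaves)
    return [next(next_slave) for _ in range(num_frames)]


slaves = ["eth0", "eth1"]  # hypothetical names for the host's two 10GbE ports
schedule = round_robin_transmit(10, slaves)
print(schedule[:4])             # ['eth0', 'eth1', 'eth0', 'eth1']
print(dict(Counter(schedule)))  # {'eth0': 5, 'eth1': 5}
```

Nothing in this model touches the receive direction: which port an inbound frame arrives on is decided by the sender and the switch, not by the host's bond, which is why writes to the host should not benefit in the same way.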

The following configurations were used in the experiment:

Host Storinator

• S45 Enhanced

• Supermicro X9SRL-F Motherboard

• Intel Xeon E5 2620 v2 2.10GHz Processor

• 2 Intel X540-T2 10GbE Network Interface Controllers

• 32 GB DDR3 ECC RAM

• 16 Drive RAID0 120GB SSDs

• LSI 9201 HBA

• CentOS 7 MATE

Storinator Client 2

• S45 Base

• Supermicro X9SCM-F Motherboard

• Intel Xeon E3 1275 3.5 GHz Processor

• 32 GB DDR3 ECC RAM

• Intel X540-T2 10GbE Network Interface Controller

• 15 Drive RAID0 6TB WD Re Enterprise HDDs

• LSI 9201 HBA

• CentOS 7 MATE

Storinator Client 3

• S45 Enhanced

• Supermicro X9SRL-F Motherboard

• Intel Xeon E5 2620 v2 2.10GHz Processor

• Intel X540-T2 10GbE Network Interface Controller

• 32 GB DDR3 ECC RAM

• 15 Drive RAID0 1TB Seagate Enterprise HDDs

• Rocket 750 HBA

• CentOS 7 MATE

Storinator Client 4

• S45 Turbo

• Supermicro X10DRL-I Motherboard

• 2 Intel Xeon E5 2620 v3 2.10GHz Processors

• Intel X540-T2 10GbE Network Interface Controller

• 32 GB DDR4 ECC RAM

• 15 Drive RAID0 6TB WD Re Enterprise HDDs

• Rocket 750 HBA

• CentOS 7 MATE

Storinator Client 5

• Storinator Q30

• Supermicro X9SCM-F Motherboard

• Intel Core i3 2100 3.10 GHz Processor

• Chelsio T40-BT Network Interface Controller

• 8 GB DDR3 ECC RAM

• 15 Drive RAID0 1TB Seagate Enterprise HDDs

• Rocket 750 HBA

• CentOS 7 MATE

Storinator Client 6

• Storinator Q30

• Supermicro X9SCM-F Motherboard

• Intel Xeon E3 1275 3.5 GHz Processor

• Intel X540-T2 Network Interface Controller

• 16 GB DDR3 ECC RAM

• 26 Drive RAID0 6TB WD Ae Enterprise HDDs

• LSI 9201 HBA

• CentOS 7 MATE

The Experiment

All clients were connected to a Samba share on the bonded host storage server running CentOS 7.

A 400 GB file containing video clips of various sizes was transferred from the host to each client at approximately the same time (within seconds). The host can read files at about 2.5 GB/s, which I verified locally on the host using iozone, a file system benchmark utility. The RAID0 array on each client (15 drives on most of them) can also be written to at over 700 MB/s. (For production environments, you may not want to use striped arrays; a much larger array of HDDs in a RAID6 configuration will give you similar performance.) Since 2.5 GB/s works out to 20 Gbit/s, and five clients can absorb more than 3.5 GB/s between them, 5 clients pulling the file at the same time should saturate our 20 Gigabit network!
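The expectation that the network will be the limit is a simple bottleneck check: convert each stage's rate to Gbit/s and take the smallest. The sketch below assumes decimal units (1 GB = 1000 MB, 8 bits per byte), uses only the figures quoted above, and ignores protocol overhead; the function name is illustrative.

```python
def mb_s_to_gbit_s(mb_per_s):
    """Convert MB/s to Gbit/s using decimal units and 8 bits per byte."""
    return mb_per_s * 8 / 1000


# Per-stage ceilings for the read test, from the figures quoted in the post.
ceilings_gbit_s = {
    "host RAID0 read (iozone, ~2.5 GB/s)": mb_s_to_gbit_s(2500),
    # "over 700 MB/s" per client, so this is a lower bound.
    "five client arrays (>700 MB/s each)": mb_s_to_gbit_s(5 * 700),
    "bonded network (2 x 10GbE)": 2 * 10.0,
}

for stage, gbit in ceilings_gbit_s.items():
    print(f"{stage}: {gbit:.1f} Gbit/s")

bottleneck = min(ceilings_gbit_s.values())
print(f"expected ceiling: {bottleneck:.1f} Gbit/s")
```

The clients can absorb at least 28 Gbit/s, so the read test is capped at 20 Gbit/s by the bonded network, with the host array sitting right at the same figure.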

For the second part of the experiment, I went the other way and wrote the 400 GB file from each client to the host. Because our bonding mode is transmit-balancing only, I expected the transfer rates to sum to about 10 Gigabits/second.

Finally, to see if we could achieve 20 Gigabit in both directions, I switched the bonding mode to adaptive load balancing (balance-alb) and ran the same file transfer experiment.

The Results

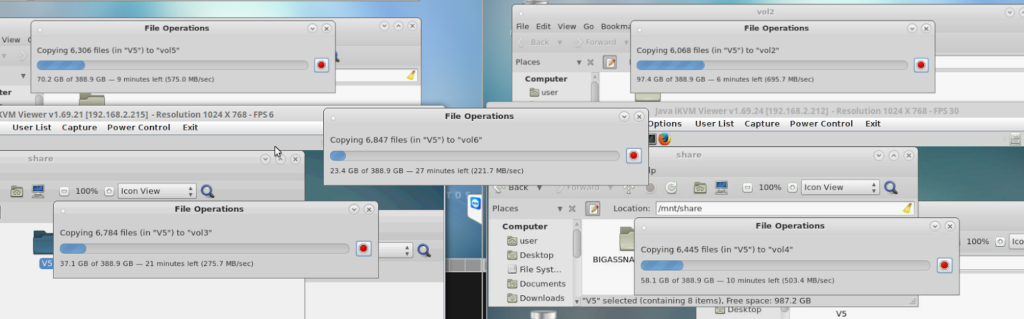

This image shows the file operations of all 5 clients reading from the host a few minutes after the file transfer started.

Summing the transfer speeds gives 2,271.5 MB/s, which converts to 18.2 Gigabits/s. Considering TCP overhead, the 20 Gigabit pipe is effectively full!
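The conversion, and how much room TCP overhead leaves, can be checked in a few lines. The overhead model is an assumption: a standard 1500-byte MTU (no jumbo frames), counting only Ethernet framing plus IPv4 and TCP headers, and ignoring TCP options and SMB framing, so treat the result as a rough upper bound on payload throughput.

```python
# Assumed framing for a standard 1500-byte MTU (no jumbo frames).
MTU = 1500
IP_HEADER = 20          # IPv4, no options
TCP_HEADER = 20         # TCP options (e.g. timestamps) not counted
ETH_WIRE_OVERHEAD = 38  # preamble+SFD 8, header 14, FCS 4, inter-frame gap 12

payload_per_frame = MTU - IP_HEADER - TCP_HEADER  # 1460 bytes
bytes_on_wire = MTU + ETH_WIRE_OVERHEAD           # 1538 bytes
efficiency = payload_per_frame / bytes_on_wire    # ~0.949

link_gbit_s = 2 * 10.0
max_payload_gbit_s = link_gbit_s * efficiency     # ~18.99

measured_gbit_s = 2271.5 * 8 / 1000               # summed client reads
print(f"measured: {measured_gbit_s:.2f} Gbit/s")
print(f"TCP payload ceiling: {max_payload_gbit_s:.2f} Gbit/s")
print(f"utilization: {measured_gbit_s / max_payload_gbit_s:.0%}")
```

Under those assumptions, the measured 18.17 Gbit/s is roughly 96% of what two 10GbE links can carry as TCP payload.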

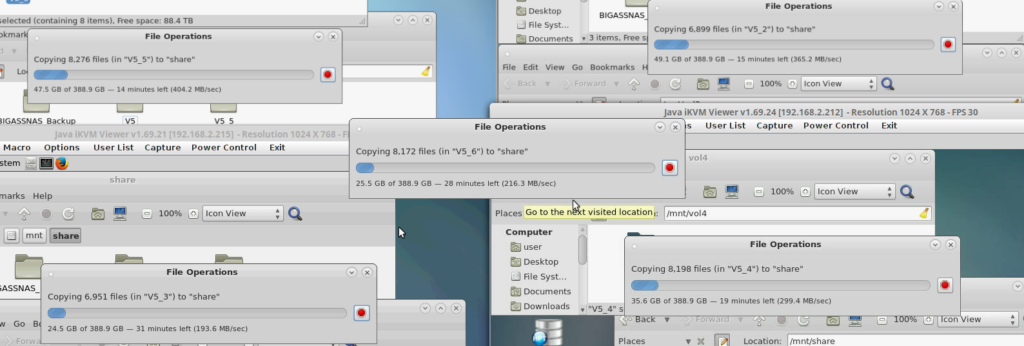

The next image shows the file operations of all 5 clients writing to the Host Storage Server.

Summing the transfer speeds gives 1,478.8 MB/s, which converts to 11.83 Gigabits/s.

This speed is significantly less than the 18.2 Gbit/s I saw in the reads. I am confident this is because we are using a bonding mode that is transmit-balancing only.

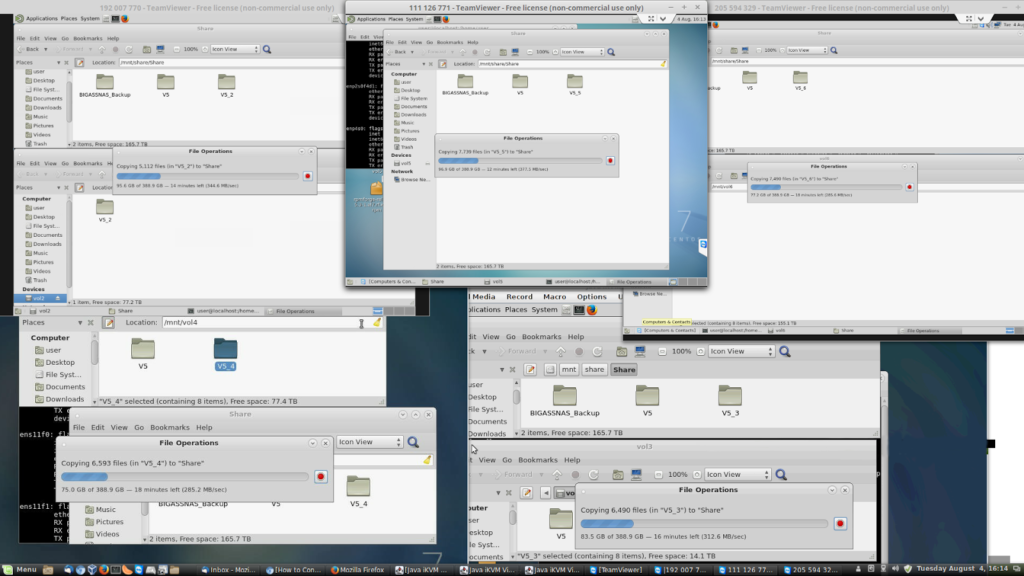

After switching the bonding mode to adaptive load balancing and rerunning the same file transfer experiment, the reads (5 clients reading from the host) were unchanged at 18.2 Gigabits/s. I expected this, as adaptive load balancing spreads outgoing traffic across both links much as balance round-robin does.

On the other hand, the writes (specifically, 5 clients writing to the host Storinator), did not do what I expected.

(Well, I didn’t really know what to expect. But I was hoping for 20 GbE!)

The sum of the file transfers was 12.844 Gigabits/s, unfortunately not the 20 Gigabit I was hoping for. However, there does seem to be some load balancing going on. I played with some parameters in the bonding config file, including updelay, since documentation online said that updelay must be greater than or equal to the switch’s forwarding delay or performance will suffer.
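One property of adaptive load balancing worth keeping in mind here: according to the Linux bonding documentation, balance-alb balances receive traffic by answering each peer's ARP requests with the MAC address of one particular slave, handing slaves out in turn. Inbound traffic is therefore balanced per client, not per packet. The toy model below (not the driver's code; the `eth0`/`eth1` names are hypothetical) shows how the five clients in this setup would be spread over two ports.

```python
from collections import defaultdict
from itertools import cycle


def alb_receive_assignment(clients, slaves):
    """Toy balance-alb receive side: each peer is told one slave's MAC,
    with slaves handed out round-robin as peers ARP for the host."""
    next_slave = cycle(slaves)
    return {client: next(next_slave) for client in clients}


clients = [f"client{n}" for n in range(2, 7)]  # Storinator clients 2-6
slaves = ["eth0", "eth1"]  # hypothetical names for the host's two ports

assignment = alb_receive_assignment(clients, slaves)
by_slave = defaultdict(list)
for client, slave in assignment.items():
    by_slave[slave].append(client)

for slave, peers in by_slave.items():
    print(f"{slave}: {', '.join(peers)}")
```

Every frame a given client sends to the host arrives on its assigned port, so three writers end up sharing one 10GbE port and two share the other. This alone doesn't account for the 12.844 Gbit/s result, but it does mean inbound balancing is only as even as the client-to-port assignment.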

But, in the end, 12.844 Gigabits/s was the best I could get.

Conclusion

Through my experiments, here’s what I determined:

The next step to load-balance inbound traffic is to move to a managed switch. I don’t have one on hand at the moment, but once I get one, I hope to do another set of experiments to see if I can achieve inbound transfer speeds that match outbound. Stay tuned!

Still, I’m impressed to have achieved real file transfers from a server to several clients at close to 20 Gigabit/s, over an unmanaged switch that costs less than $1,000. That configuration matches many real-world situations.

Have an opinion about this network bonding experiment, or an idea for another test you’d like to see from the 45 Drives lab team? Let us know your thoughts in the comments.
