We here at 45Drives really, really love Ceph. It is our go-to choice for storage clustering (creating a single storage system by linking multiple servers over a network). Ceph offers a robust feature set of native tools that constantly come in handy with routine tasks or specialized challenges you may run into. Ceph is a well-established, production-ready, and open-source clustering solution. If you are curious about using Ceph to store your data, 45Drives can help guide your team through the entire process.

As mentioned, Ceph has a great native feature set that can easily handle most tasks. However, while deploying Ceph systems for a wide variety of use cases, we kept running into one gap: CephFS lacked an efficient unidirectional backup daemon. In other words, there was no native tool in Ceph for sending a massive amount of data to another system.

What led us to create Ceph Geo Replication?

Flash back a few years, and we were not selling storage clusters built on Ceph. When our engineering team was acquainting itself with different open-source clustering solutions, the original choice was Gluster. We sold and supported Gluster systems for a while, but eventually we ran into architectural issues that prompted us to make the switch to Ceph.

Since then, Ceph has been fantastic for our customers and for us, providing almost everything we needed from storage clustering software. However, it lacked a tool Gluster had called “Geo Replication”.

from Gluster docs

The continuous, asynchronous backup that Gluster Geo Replication provided was excellent for disaster recovery use cases (among others). We found ourselves wishing for a similar tool in Ceph.

Natively, CephFS lacked any tool that could effectively mimic the function of GlusterFS’s Geo Replication. Ceph users could use rsync, but it was not an efficient means of incrementally backing up data. This is because rsync needs to compare every single file in the directory tree with its backup copy, across the network, to see whether it has been modified. As network latency or file count increases, the process slows down considerably.

What is Ceph Geo Replication? How does it work?

CephFS exposes some directory statistics that most other filesystems do not provide. To build Ceph Geo Replication, we leveraged one of these directory attributes: rctime.

rctime (recursive ctime) is the most recent change time of any file or subdirectory below the given directory. Ceph Geo Replication walks the directory tree and compares each directory's rctime against the time of the previous backup. Any subtree whose rctime is not newer than that backup can be skipped entirely, saving the time that would otherwise be wasted checking unmodified files.
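The pruning idea can be sketched in a few lines of Python. This is an illustration only, not cephgeorep's actual code: the function names `read_rctime` and `changed_files` are hypothetical, and the sketch assumes the `ceph.dir.rctime` extended attribute is returned as a `seconds.nanoseconds` string, of which only the whole seconds are used.

```python
import os
from typing import Callable, Iterator


def read_rctime(path: str) -> int:
    """Return the whole-second part of the CephFS recursive ctime of `path`.

    Assumption: the xattr value looks like b"<seconds>.<nanoseconds>".
    os.getxattr is Linux-only and the path must be on a CephFS mount.
    """
    raw = os.getxattr(path, "ceph.dir.rctime")
    return int(raw.decode().split(".")[0])


def changed_files(
    root: str,
    last_sync: int,
    rctime: Callable[[str], int] = read_rctime,
) -> Iterator[str]:
    """Yield files under `root` changed after `last_sync` (epoch seconds)."""
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                # One attribute read decides whether the whole subtree
                # can be skipped without looking at any file inside it.
                if rctime(entry.path) > last_sync:
                    yield from changed_files(entry.path, last_sync, rctime)
            elif entry.stat(follow_symlinks=False).st_ctime > last_sync:
                yield entry.path
```

On a CephFS mount, `list(changed_files("/mnt/cephfs/data", last_sync))` would return only the files touched since the last run, where the mount path is a placeholder. The `rctime` parameter is injectable so the walk can be tried on any filesystem with a stand-in function.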

On its own, rctime only speeds up the initial gathering of files that need to be sent. To reduce transfer time as well, Ceph Geo Replication can launch multiple concurrent rsync processes.
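As a rough sketch of the concurrent transfer step, the list of changed files can be split into batches, with one rsync process started per batch. The helper names `partition`, `rsync_batch`, and `parallel_sync` are hypothetical, the round-robin split is an assumption (cephgeorep may balance batches differently), and the only rsync options used are the standard `-a` and `--files-from`.

```python
import subprocess
import tempfile
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def partition(files: Sequence[str], n: int) -> List[List[str]]:
    """Split `files` round-robin into at most `n` non-empty batches."""
    batches = [list(files[i::n]) for i in range(n)]
    return [batch for batch in batches if batch]


def rsync_batch(batch: List[str], src_root: str, dest: str) -> int:
    """Send one batch of paths (relative to `src_root`) with one rsync process."""
    with tempfile.NamedTemporaryFile("w", suffix=".list") as listing:
        listing.write("\n".join(batch) + "\n")
        listing.flush()
        cmd = ["rsync", "-a", f"--files-from={listing.name}", src_root, dest]
        return subprocess.run(cmd).returncode


def parallel_sync(files: Sequence[str], src_root: str, dest: str,
                  workers: int = 4) -> List[int]:
    """Run up to `workers` rsync processes at once; return their exit codes."""
    batches = partition(files, workers)
    if not batches:
        return []
    with ThreadPoolExecutor(max_workers=len(batches)) as pool:
        return list(pool.map(lambda b: rsync_batch(b, src_root, dest), batches))
```

Round-robin splitting balances the number of files per process, not the number of bytes, so a batch that happens to contain the largest files will finish last. Balancing by file size would be a natural refinement.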

All of this comes together to give you an application that can efficiently send huge amounts of data over a network.

Geo-Replication Performance Testing

To demonstrate the capabilities of Ceph Geo Replication, we performed two tests. Each test was run 3 times, and the results were averaged.

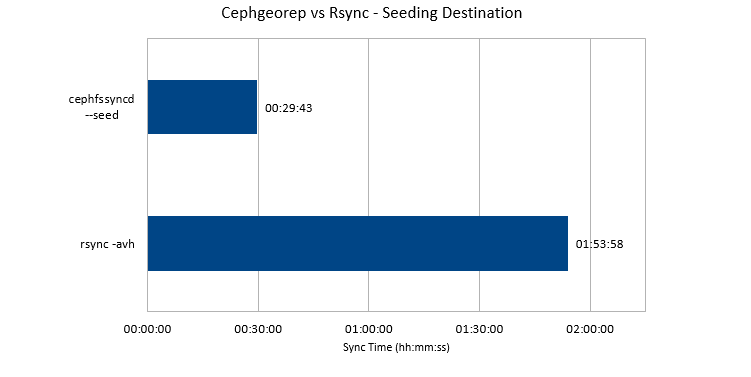

For the first test, we measured how long it would take to move a whole set of data with rsync vs. cephgeorep. The purpose of the seed time comparison test was to demonstrate the benefit of using multiple threads in parallel while transferring.

For the seed time comparison, we set up a directory tree that is 5 levels deep, with 3 subdirectories per directory (363 total directories). 500 files of 1 GB each were randomly placed in this directory tree. The entire structure was then synced to the remote backup, first with rsync and then with cephgeorep, to compare the sync times. When moving the data set, cephgeorep was on average 4 times faster.

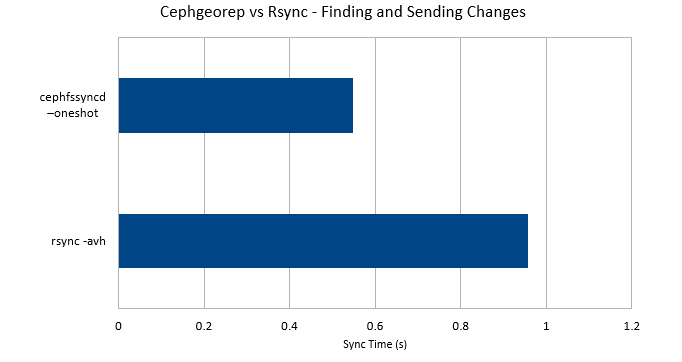

For the second test, we set up a directory tree that is 6 levels deep, with 5 subdirectories per directory (19,530 total directories). 10,000 files of 0 bytes each were randomly placed in this directory tree, and the backup was seeded. Then one file deep in the directory structure was modified. rsync and cephgeorep were each timed on finding this file and sending the change to the backup.

This test compared how long it takes to find and send a single modified file with cephgeorep vs. rsync. On average, cephgeorep was 2x faster than rsync, which shows that the rctime-based method cephgeorep uses to search the filesystem tree is faster than rsync's full scan.
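The directory counts quoted for both test trees follow from a geometric sum: with b subdirectories per directory and d levels, the tree contains b + b² + … + b^d directories, not counting the root. A short check (the function name `total_directories` is just for illustration):

```python
def total_directories(branching: int, depth: int) -> int:
    """Count directories below the root of a full tree with `depth` levels."""
    return sum(branching ** level for level in range(1, depth + 1))


print(total_directories(3, 5))  # seed time test tree: 363
print(total_directories(5, 6))  # single-file test tree: 19530
```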

How to install Ceph Geo Replication?

Ceph Geo Replication can be used by anyone with a Ceph cluster, whether they are using 45Drives hardware or not. If you wish to install cephgeorep, check out our GitHub page for instructions.

If you are curious about how to leverage Ceph for your organization's data storage, contact us today about a free webinar.
